Entropy/IP: report for dataset C2

(Client IPv6 Addresses)


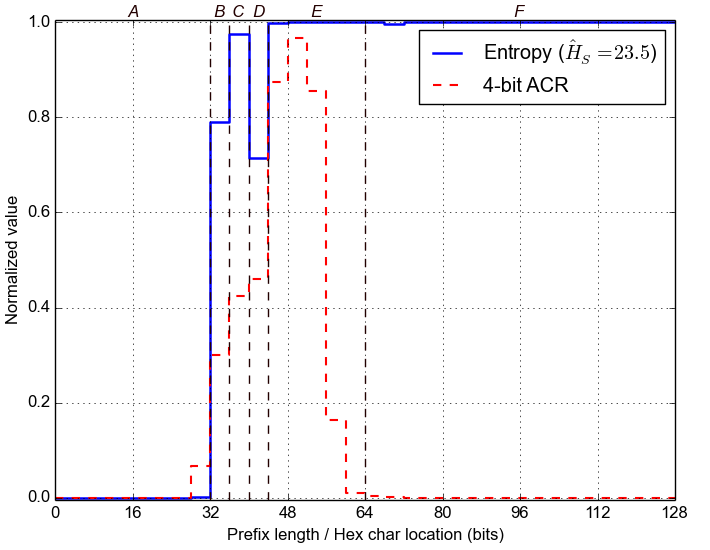

Entropy vs. 4-bit Aggregate Count Ratio (ACR)

First, we estimate the Entropy for each nybble in the IPv6 addresses, across the whole dataset. For example, if the last nybble is highly variable, then the corresponding Entropy will be high. Conversely, the Entropy will be zero for nybbles that stay constant across the dataset. Below we plot the normalized value of Entropy for each of the 32 nybbles, along with the 4-bit Aggregate Count Ratio, which was introduced in Plonka and Berger, 2015.
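The per-nybble estimate described above can be sketched as follows. This is a minimal illustration, not the report's own code: it assumes normalization by the 4-bit maximum (so values fall between 0 and 1), and the function names `to_nybbles` and `nybble_entropy` are hypothetical. The ACR curve is not computed here.

```python
import ipaddress
import math
from collections import Counter


def to_nybbles(addr):
    """Expand an IPv6 address into its 32 hex characters (no colons)."""
    return ipaddress.IPv6Address(addr).exploded.replace(":", "")


def nybble_entropy(addresses):
    """Return 32 normalized Shannon entropies, one per nybble position."""
    rows = [to_nybbles(a) for a in addresses]
    total = len(rows)
    result = []
    for pos in range(32):
        counts = Counter(row[pos] for row in rows)
        # Shannon entropy in bits for the values seen at this position.
        h = sum((c / total) * math.log2(total / c) for c in counts.values())
        # Assumption: normalize by 4 bits, the maximum for 16 hex symbols.
        result.append(h / 4.0)
    return result


sample = ["2001:db8::1", "2001:db8::2", "2001:db8::3", "2001:db8::4"]
entropy = nybble_entropy(sample)
```

In this toy sample the leading nybbles never change, so their entropy is zero, while the last nybble takes four equally likely values and so carries 2 of a possible 4 bits.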

Second, we group adjacent nybbles with similar Entropy to form larger segments, with the expectation that they represent semantically different parts of each address. We label these segments with letters and mark them with dashed lines in the plot below.
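One simple way to picture this grouping is to start a new segment whenever the entropy jumps by more than a threshold between neighboring nybbles. The sketch below assumes exactly that rule; the threshold value and the name `segment_by_entropy` are hypothetical, and the rule used to produce this report may differ.

```python
import string


def segment_by_entropy(entropy, threshold=0.05):
    """Group adjacent nybbles; start a new segment on a jump > threshold.

    Returns a list of (label, first_nybble, end_nybble_exclusive) tuples.
    """
    boundaries = [0]
    for i in range(1, len(entropy)):
        if abs(entropy[i] - entropy[i - 1]) > threshold:
            boundaries.append(i)
    boundaries.append(len(entropy))
    # 52 labels are enough, since 32 nybbles give at most 32 segments.
    labels = string.ascii_uppercase + string.ascii_lowercase
    return [(labels[k], boundaries[k], boundaries[k + 1])
            for k in range(len(boundaries) - 1)]


# Made-up entropy profile: constant prefix, two mid-entropy runs, random tail.
profile = [0.0] * 8 + [0.8] * 2 + [0.7] * 6 + [1.0] * 16
segments = segment_by_entropy(profile)
```

With this made-up profile the function yields four segments covering nybbles 0-8, 8-10, 10-16, and 16-32.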

Segment Mining

Next, we search the segments for the most popular values and ranges of values within them. For that purpose, we use statistical methods for detecting outliers and the DBSCAN machine learning algorithm. We analyze the distribution and frequencies of values inside address segments.
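To give a feel for the frequency part of this step, the sketch below separates popular values from the remainder using a plain share threshold. That threshold is a deliberate simplification standing in for the outlier detection and DBSCAN clustering named above; `mine_segment` and `min_share` are hypothetical names.

```python
from collections import Counter


def mine_segment(values, min_share=0.1):
    """Split one segment's values into popular values and a leftover range.

    values: equal-width lowercase hex strings taken from one segment.
    Returns (popular, leftover): popular is a list of (value, share) pairs,
    leftover is (lowest, highest, share), or None if nothing remains.
    """
    total = len(values)
    counts = Counter(values)
    popular = [(v, c / total) for v, c in counts.most_common()
               if c / total >= min_share]
    popular_set = {v for v, _ in popular}
    rest = [v for v in values if v not in popular_set]
    # Equal-width lowercase hex strings sort in numeric order.
    leftover = (min(rest), max(rest), len(rest) / total) if rest else None
    return popular, leftover


values = ["9"] * 10 + ["5"] * 6 + ["0", "3", "a", "c"]
popular, leftover = mine_segment(values)
```

Here "9" and "5" come out as popular values, and the four rare values collapse into a single range from "0" to "c", mirroring the value-plus-range layout of the results.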

Below we present the results, with ranges of values shown as two values in italics (bottom to top). The last column gives the relative frequency across the whole dataset. The /32 prefixes are anonymized.

A: bits 0-32 (hex chars 1-8)

- 20010db8 99.94%

B: bits 32-36 (hex chars 9-9)

- 9 17.53%

- 5 16.42%

- 6 13.36%

- b 10.45%

- 8 9.20%

- 4 9.08%

- * 0-c 23.97%

C: bits 36-40 (hex chars 10-10)

- 0 11.19%

- 5 8.55%

- 4 8.50%

- d 8.27%

- 9 7.71%

- * 1-f 55.77%

D: bits 40-44 (hex chars 11-11)

- 7 14.32%

- 4 14.23%

- 1 14.21%

- 3 14.19%

- 5 14.10%

- 6 14.03%

- 2 13.97%

- 0 0.96%

E: bits 44-64 (hex chars 12-16)

- e7dfd 0.01%

- 43da8 0.01%

- 1c9cc 0.01%

- fc8c2 0.01%

- 1983c 0.01%

- 6756a 0.01%

- c7055 0.01%

- bcee9 0.01%

- 73f59 0.01%

- * 0000b-fffe7 99.94%

F: bits 64-128 (hex chars 17-32)

- * 01019cae50773420-ffffce7f38c6a800 98.80%

- * 0000000000000015-00ffdee479b6c820 1.17%
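The bit ranges listed above translate directly into slices of the 32-character hex form of an address. The following sketch splits an address into segments A through F using those boundaries; the name `split_address` and the example address are hypothetical.

```python
import ipaddress

# Segment boundaries as hex-character offsets (0-based, end exclusive),
# derived from the bit ranges in the report: A = bits 0-32, B = bits 32-36,
# C = bits 36-40, D = bits 40-44, E = bits 44-64, F = bits 64-128.
SEGMENTS = {"A": (0, 8), "B": (8, 9), "C": (9, 10),
            "D": (10, 11), "E": (11, 16), "F": (16, 32)}


def split_address(addr):
    """Return a dict mapping each segment label to its hex substring."""
    hex32 = ipaddress.IPv6Address(addr).exploded.replace(":", "")
    return {name: hex32[lo:hi] for name, (lo, hi) in SEGMENTS.items()}


parts = split_address("2001:db8:9512:3456::15")
```

For this example address, segment A is the anonymized /32 prefix `20010db8` and segment F is the 64-bit interface identifier.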

Bayesian Network Structure

Next, we search for statistical dependencies between the segments. For that purpose, we train a Bayesian Network (BN) from data.

Below we show the structure of the corresponding BN model. Arrows indicate direct statistical influence. Note that directly connected segments can probabilistically influence each other in both directions (upstream / downstream). Under some conditions, segments without a direct connection can still influence each other through other segments: e.g., A can influence C through B if C depends on B and B depends on A (even if there is no direct arrow between A and C).
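The indirect influence of A on C through B can be checked numerically by marginalizing B out of a chain A -> B -> C. The conditional probability tables below are invented for illustration and are not taken from this dataset; `p_c_given_a` is a hypothetical helper.

```python
# Hypothetical conditional probability tables for a chain A -> B -> C.
P_B_GIVEN_A = {"a1": {"b1": 0.9, "b2": 0.1},
               "a2": {"b1": 0.2, "b2": 0.8}}
P_C_GIVEN_B = {"b1": {"c1": 0.7, "c2": 0.3},
               "b2": {"c1": 0.1, "c2": 0.9}}


def p_c_given_a(a):
    """Marginalize B out: P(C | A=a) = sum over b of P(C | b) * P(b | a)."""
    result = {}
    for b, p_b in P_B_GIVEN_A[a].items():
        for c, p_c in P_C_GIVEN_B[b].items():
            result[c] = result.get(c, 0.0) + p_b * p_c
    return result


dist_a1 = p_c_given_a("a1")
dist_a2 = p_c_given_a("a2")
```

The two resulting distributions over C differ (roughly 0.64 versus 0.22 for `c1`), so the value of A changes what we expect for C even though no arrow connects them directly.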

Learning BN structure from data is in general a challenging optimization problem. Hence, there might be more than one possible BN structure graph for the same dataset.

Conditional Probability Browser

Finally, below we show an interactive browser that decomposes IPv6 addresses into segments, values, ranges, and their corresponding probabilities. The browser lets you explore the underlying BN model and see how certain segment values probabilistically influence the other segments.

Try clicking on the colored boxes below. You should see the colors changing, which reflects the fact that some segment values can make the other values more (or less) likely. For instance, in the Sample Report, you may find that clicking on J1 (i.e., the first value in segment J) makes segments C, D, F, H, and I largely predictable (see our paper for more examples).

You may condition the model on many segment values. Clicking on selected values un-selects them. Clicking on the red "Clear" above the color map un-selects them all. Below the browser we show the estimated proportion of the addresses matching your selection (vs. the dataset). If the browser cannot estimate the probabilities in a reasonable time, it asks before trying harder.

Candidate Target Addresses

Using the BN model, below we generate a few candidate target IPv6 addresses matching the selection above. Note that we anonymize the IPv6 addresses in this report.
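As a rough illustration of candidate generation, the sketch below draws one value per segment and assembles the result into an address. It is a simplification under stated assumptions: segments are sampled independently, so it ignores the dependencies that the BN captures, and the weights in `MODEL` are invented. Only the segment widths and a few values and ranges are borrowed from the report; `draw` and `candidate` are hypothetical names.

```python
import ipaddress
import random

# Segment widths in hex characters, from the report's bit ranges.
WIDTHS = {"A": 8, "B": 1, "C": 1, "D": 1, "E": 5, "F": 16}

# Hypothetical model: each segment lists (choice, weight) pairs. A choice is
# a fixed hex string or a (low, high) range tuple. Weights are made up.
MODEL = {
    "A": [("20010db8", 1.0)],
    "B": [("9", 0.5), (("0", "c"), 0.5)],
    "C": [(("1", "f"), 1.0)],
    "D": [("7", 0.5), ("4", 0.5)],
    "E": [(("0000b", "fffe7"), 1.0)],
    "F": [(("0000000000000015", "00ffdee479b6c820"), 1.0)],
}


def draw(segment, rng):
    """Pick a value for one segment; ranges are sampled uniformly."""
    choices = [c for c, _ in MODEL[segment]]
    weights = [w for _, w in MODEL[segment]]
    choice = rng.choices(choices, weights=weights, k=1)[0]
    if isinstance(choice, tuple):
        low, high = (int(x, 16) for x in choice)
        # Zero-pad to the segment width so the address stays 32 hex chars.
        return format(rng.randint(low, high), "0{}x".format(WIDTHS[segment]))
    return choice


def candidate(rng):
    """Assemble one candidate address from independently drawn segments."""
    hex32 = "".join(draw(s, rng) for s in "ABCDEF")
    return str(ipaddress.IPv6Address(int(hex32, 16)))


rng = random.Random(0)
candidates = [candidate(rng) for _ in range(3)]
```

A sampler that follows the BN would instead draw each segment conditioned on the values already chosen for its parent segments.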

As we show in the paper, this technique allowed us to successfully scan IPv6 networks of servers and routers, and to predict the IPv6 network identifiers of active client IPv6 addresses.